If you’re building robots in 2026, you already feel it in your bones: synthetic data for robotics training is no longer a “nice-to-have.” It’s becoming the default. Physical AI training data is the real choke point: not GPUs, not model architecture, not even algorithms. And once you zoom out, it’s obvious why. 3D synthetic datasets for AI, especially synthetic data for computer vision, are often the quickest path to robust perception and safer deployment. In plain terms, robot training with synthetic data is how teams stop waiting on the world to behave and start shipping systems that work when it doesn’t.

Now, let’s talk about why this shift is happening, what makes it technically viable, and how Vivid 3D turns the idea into an enterprise-grade data engine.

Quick Summary. Why Physical AI Runs on Synthetic 3D Data

Training intelligent robots at scale requires more than real-world footage. It requires synthetic data.

The volume problem. Physical AI models need huge labeled corpora; real-world capture and annotation are slow, expensive, and often unsafe.

Synthetic scales. 3D simulation platforms can generate massive, automatically labeled datasets in days, not quarters.

Photorealism matters. The closer synthetic scenes look to reality, the easier it becomes to transfer learning into deployment.

Scenario variety. You can simulate lighting, clutter, rare failures, and edge cases you would never plan a real shoot for.

Cleaner iteration loops. When a model fails, you can regenerate targeted data on demand, then retrain quickly.

Enterprise pipelines win. The teams that industrialize dataset generation build a compounding advantage: faster iteration, better coverage, less risk.

From Cloud to Factory Floor. What Is Physical AI?

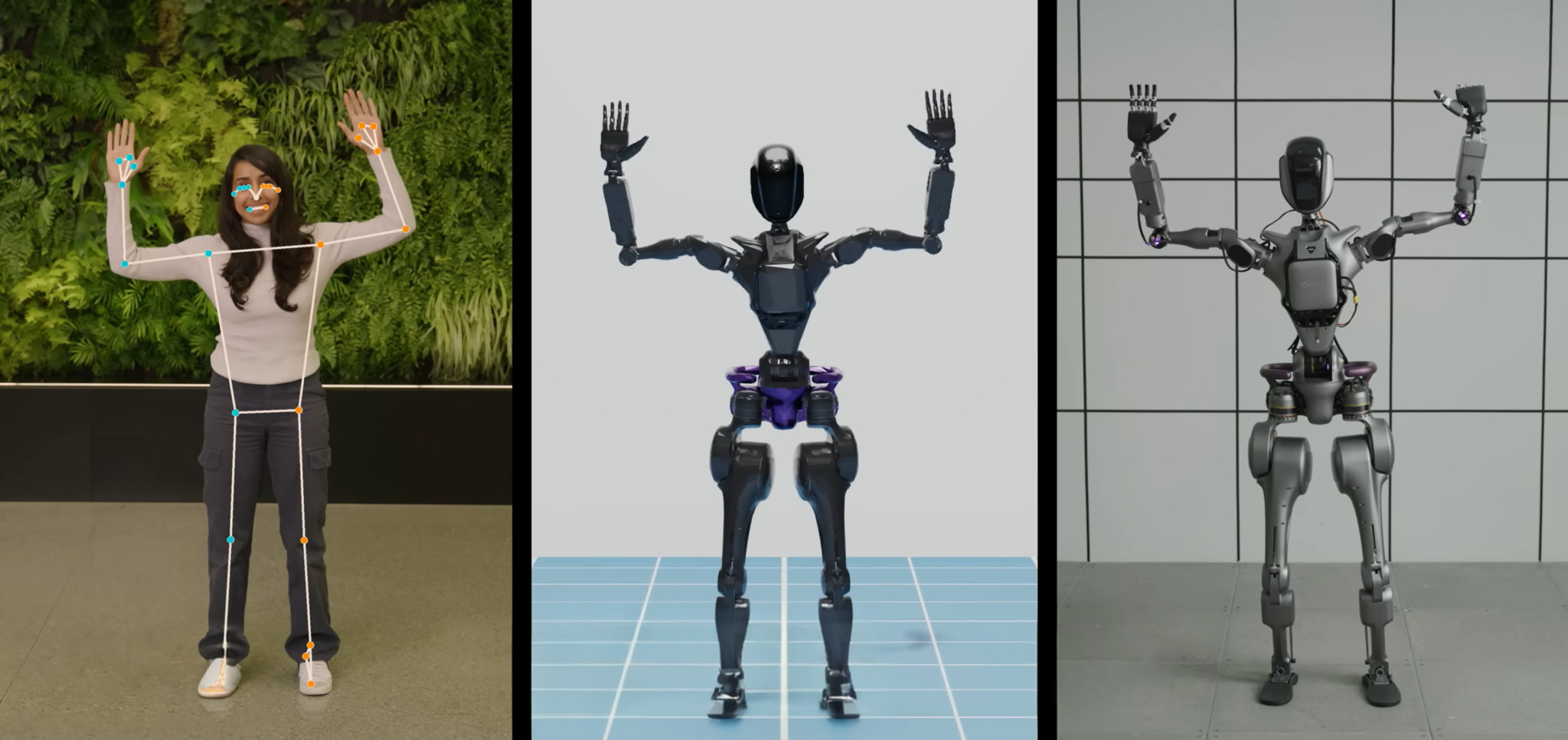

Physical AI is basically AI that doesn’t just “predict” or “classify,” but acts. It perceives the world through sensors, reasons under uncertainty, and then moves, grasps, navigates, or cooperates with humans and machines.

If that sounds like robotics, it is. Still, something has changed.

A few decades ago, robots were mostly caged, scripted, and predictable. They repeated the same weld, the same pick, the same motion path, over and over. It was brilliant engineering, but brittle. The moment the environment drifted (a pallet shifted, lighting changed, a label wrinkled), the robot either slowed to a crawl or failed.

Physical AI is the next chapter. It turns robots into adaptable systems that learn patterns from data and handle messy reality. NVIDIA’s definition captures it well: Physical AI enables autonomous systems to perceive, understand, reason, and perform complex actions in the physical world.

That’s why you’re seeing excitement across warehouses, roads, ports, factories, and inspection sites. Autonomous forklifts and pickers. Inspection drones. Mobile manipulators. Even early-stage general-purpose robots. The application list grows every quarter.

And yet, the limiting factor is not imagination. It’s data.

The Training Data Problem No One Talks About

Robotics teams don’t get to scrape the internet the way language models do. A warehouse robot doesn’t learn from blogs and PDFs. It learns from camera frames, depth signals, point clouds, and spatial labels that reflect the environment it will actually face.

So you run into three problems, fast.

First, the coverage gap. Real-world data is never enough. You might collect “normal” runs for weeks and still miss the awkward corner cases: glare on polished floors, dust on a lens, a pallet sticking out by 12 cm, a worker stepping into the path at the wrong time. Robots fail on these weird moments because they barely show up in the captured data, yet they are exactly what reality serves up on a Tuesday afternoon.

Second, the labeling grind. Dense perception training usually needs bounding boxes, segmentation masks, keypoints, depth, pose, and more. Humans can label some of it, sure, but it’s slow, inconsistent, and hard to audit.

Third, the iteration tax. When models fail in the field, you need new training examples that replicate that failure mode. With real-world data, that often means redeploy, recapture, relabel, repeat. Painful.

Here’s a simple way to put it: Physical AI doesn’t just need data. It needs a data factory.

Real-World Data vs Synthetic 3D Data for Robotics Training

The punchline is uncomfortable, but true: the data gap is quietly throttling the Physical AI revolution.

Why Synthetic Data for Robotics Training Is the Answer

Synthetic data is training data produced by simulation rather than physical cameras. That’s the simple definition. The practical meaning is bigger.

In a 3D world, you can generate the same scene thousands of times with different lighting, object placement, clutter, motion patterns, camera positions, materials, and sensor noise. Then you export not just the pixels, but also perfect labels. No annotation team. No guessing. No “we disagree where the box starts.” You get consistent ground truth.
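
To make the “perfect labels” point concrete, here is a minimal sketch of how a 2D bounding box can fall straight out of scene geometry. It assumes an ideal pinhole camera with no lens distortion. The function name `project_box_to_bbox`, the cube, and the intrinsics values are illustrative assumptions, not the API of any particular simulator.

```python
import numpy as np

def project_box_to_bbox(corners_cam, fx, fy, cx, cy):
    """Project 3D box corners (camera frame, metres, +Z forward) to a 2D box.

    Returns (x_min, y_min, x_max, y_max) in pixels. Assumes an ideal pinhole
    camera and that every corner lies in front of the camera (z > 0).
    """
    corners_cam = np.asarray(corners_cam, dtype=float)
    if np.any(corners_cam[:, 2] <= 0):
        raise ValueError("all corners must have z > 0")
    # Standard pinhole projection: u = fx * X / Z + cx, v = fy * Y / Z + cy.
    u = fx * corners_cam[:, 0] / corners_cam[:, 2] + cx
    v = fy * corners_cam[:, 1] / corners_cam[:, 2] + cy
    return float(u.min()), float(v.min()), float(u.max()), float(v.max())

# Hypothetical 1 m cube centred 4.5 m in front of the camera.
cube = [(x, y, z) for x in (-0.5, 0.5) for y in (-0.5, 0.5) for z in (4.0, 5.0)]
bbox = project_box_to_bbox(cube, fx=800.0, fy=800.0, cx=640.0, cy=360.0)
```

Because the box is computed from the same geometry that produced the pixels, there is no annotator judgment involved. A production pipeline would also need to handle occlusion, image-border clipping, and lens distortion, which this sketch leaves out.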

The result is a different cadence of development. You stop treating data collection like a slow expedition and start treating it like manufacturing. Model fails in dim lighting? Generate dim lighting. Model misses reflective surfaces? Add reflective materials and glare. Robot gets confused by partial occlusion? Simulate stacks, occlusions, and messy arrangements.
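
One way to picture “model fails in dim lighting, so generate dim lighting” is as a lookup from failure tags to scenario overrides. The sketch below is a hypothetical illustration: the parameter names, value ranges, and failure tags are assumptions made up for the example, not a real scenario schema.

```python
from copy import deepcopy

# Hypothetical baseline scenario parameters (ranges are (min, max) tuples).
BASE_SCENARIO = {
    "light_intensity_lux": (300, 1000),
    "reflective_material_ratio": 0.1,
    "occlusion_ratio": (0.0, 0.2),
}

# Hypothetical failure tags, each mapped to the parameters it should override.
FAILURE_OVERRIDES = {
    "dim_lighting": {"light_intensity_lux": (5, 80)},
    "reflective_surfaces": {"reflective_material_ratio": 0.6},
    "partial_occlusion": {"occlusion_ratio": (0.3, 0.8)},
}

def targeted_scenario(base, failure_tags):
    """Return a copy of the base scenario with overrides for each failure tag."""
    scenario = deepcopy(base)
    for tag in failure_tags:
        if tag not in FAILURE_OVERRIDES:
            raise KeyError(f"no override defined for failure tag: {tag}")
        scenario.update(FAILURE_OVERRIDES[tag])
    return scenario

scenario = targeted_scenario(BASE_SCENARIO, ["dim_lighting", "partial_occlusion"])
```

The base scenario is never mutated, so each targeted regeneration run can be traced back to the same starting point plus a named set of overrides.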

Simulation-to-real transfer, often called Sim2Real, is the bridge here. The gap between synthetic and real still exists, yes. But it’s manageable when you generate diverse, realistic data and validate it properly. In many teams, synthetic becomes the backbone, and real data becomes the calibration layer.
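
A simple way to read “synthetic as backbone, real as calibration layer” is a fixed share of real samples in every training batch. The sketch below shows that idea only; it is one assumption among several possible strategies (fine-tuning on real data after synthetic pretraining is another), and the `mixed_batch` helper and the 25% ratio are illustrative.

```python
import random

def mixed_batch(synthetic, real, batch_size, real_fraction, rng):
    """Draw a batch with a fixed share of real samples, the rest synthetic.

    Samples are drawn with replacement so a small real set can still
    contribute to every batch.
    """
    n_real = round(batch_size * real_fraction)
    n_synthetic = batch_size - n_real
    batch = rng.choices(real, k=n_real) + rng.choices(synthetic, k=n_synthetic)
    rng.shuffle(batch)
    return batch

# Placeholder samples: (source, index) tuples stand in for real training records.
synthetic_pool = [("syn", i) for i in range(100)]
real_pool = [("real", i) for i in range(10)]
batch = mixed_batch(synthetic_pool, real_pool, batch_size=32,
                    real_fraction=0.25, rng=random.Random(0))
```

The right ratio is an empirical question; validating on held-out real data is what tells you whether the mix is working.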

There’s also a strong industry signal. Waymo, for example, says it has driven more than 20 billion miles in simulation to explore challenging scenarios and systematically stress its system.

Same principle. Different domain. The point stands: when reality is too slow, you build a controllable proxy and learn at scale.

What Makes 3D Synthetic Data Work. The Technical Requirements

The market is full of “synthetic data” claims. Some are fluff. The difference between toy datasets and production-grade training corpora comes down to a few requirements that sound boring, but absolutely make or break outcomes.

Photorealism

If your renders look like a video game from 2012, models learn the wrong cues. They overfit to synthetic artifacts and then stumble in the field. High-quality rendering with physically based materials, believable lighting, and correct shadows reduces that mismatch.

Photorealism is not a magic spell, though. It’s a baseline. The real value shows up when photorealism is paired with systematic variation.

Scene Variability and Randomization

Robots fail on the long tail. That’s the whole story. So the dataset must include controlled randomness: shifting object positions, rotating SKUs, changing textures, altering illumination, injecting clutter, introducing occlusions, and mixing “clean” and “dirty” environments.

In practice, this becomes a design problem. You’re not just generating images. You’re designing distributions.
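
“Designing distributions” can be as plain as writing down what each scene parameter is sampled from. Here is a minimal sketch using only the Python standard library. The parameter names, ranges, and the 60/40 clean-to-dirty split are assumptions chosen for illustration.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class SceneParams:
    light_lux: float
    object_yaw_deg: float
    clutter_count: int
    environment: str

def sample_scene(rng):
    """Sample one scene configuration from explicit, documented distributions."""
    return SceneParams(
        # Log-uniform between 10 and 1000 lux, so dim scenes are well covered.
        light_lux=10 ** rng.uniform(1.0, 3.0),
        object_yaw_deg=rng.uniform(0.0, 360.0),
        clutter_count=rng.randint(0, 12),  # inclusive on both ends
        environment=rng.choices(["clean", "dirty"], weights=[0.6, 0.4])[0],
    )

def sample_dataset(n, seed):
    """Sample n scenes reproducibly from a seeded generator."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]

scenes = sample_dataset(1000, seed=42)
```

Seeding the generator means the same seed always reproduces the same scene list, which makes a dataset something you can regenerate and compare rather than a one-off.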

Multi-Perspective and Multi-Sensor Support

Many real robots don’t see like smartphones. They have multiple cameras, depth sensors, LiDAR, and sometimes thermal or IR sensors. Training on RGB alone may work for demos and then collapse in real operations.

A modern synthetic pipeline should output multiple modalities from the same scenario, aligned in time and geometry. That unlocks better fusion models, better calibration, and better validation.
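
“Aligned in geometry” has a concrete meaning: given a depth image and the camera intrinsics, every pixel maps to one 3D point, so RGB, depth, and point cloud all describe the same scene. The sketch below back-projects a depth map under an ideal pinhole model. The function name and the intrinsics values are illustrative assumptions.

```python
import numpy as np

def depth_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth image (H, W), in metres, to (H, W, 3) points.

    Points are in the camera frame with +Z forward. Assumes an ideal pinhole
    camera, with depth measured along the optical axis.
    """
    h, w = depth.shape
    # u holds column indices and v holds row indices, both shaped (H, W).
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return np.stack([x, y, depth], axis=-1)

# Hypothetical flat wall 2 m away, seen by a tiny 6x4 sensor.
depth = np.full((4, 6), 2.0)
points = depth_to_points(depth, fx=100.0, fy=100.0, cx=3.0, cy=2.0)
```

Since `points[row, col]` corresponds to the RGB pixel at the same index, per-pixel labels such as segmentation masks carry over to the 3D points without extra work.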

Scale and Automation

Hand-building synthetic datasets defeats the purpose. You want a system where generating 100,000 or 1,000,000 frames is not a heroic effort. It’s a repeatable job with versioning, metadata, and export formats your ML stack can consume.
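
A “repeatable job with versioning and metadata” can start with something as small as a manifest whose identifier is derived from the generation config. The following is a minimal sketch; the field names and the `ds-` identifier scheme are assumptions for illustration, not a standard.

```python
import hashlib
import json

def build_manifest(config, frame_count, generator_version):
    """Build a dataset manifest whose ID is derived from the generation config.

    The config is serialized with sorted keys so that the same settings always
    produce the same hash, regardless of dictionary ordering.
    """
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    config_hash = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return {
        "dataset_id": f"ds-{config_hash[:12]}",
        "config_sha256": config_hash,
        "generator_version": generator_version,
        "frame_count": frame_count,
        "config": config,
    }

manifest = build_manifest(
    {"seed": 42, "scene": "warehouse_aisle", "modalities": ["rgb", "depth"]},
    frame_count=100_000,
    generator_version="0.1.0",
)
```

Two runs with identical settings get the same `dataset_id`, and any change to the config yields a new one, which is the basic property you want before calling something a versioned dataset.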

This is the difference between “a simulation” and “a data engine.”

Synthetic Outputs That Matter for Robot Perception

Vivid 3D supports multi-modal synthetic data, automatic ground truth, and large-scale exports, which is exactly what this requirement set implies.

How Vivid 3D Powers Physical AI Training Data at Scale

Here’s the part many teams underestimate: synthetic data isn’t only about rendering. The hard part is operationalizing it. Dataset versioning. Provenance. Scenario packs. Automation. Integration into MLOps. And the ability to regenerate data when the world changes.

Vivid 3D is built as a Visual Data Platform that treats 3D assets and datasets as first-class, managed artifacts. It’s not a loose set of tools. It’s a pipeline.

A few platform capabilities matter specifically for Physical AI:

Vivid 3D can generate synthetic datasets on demand, scaling to large volumes of images, video, or point clouds, with automatic labels like bounding boxes, masks, keypoints, 6D pose, and optical flow. It also supports standard dataset formats such as COCO, KITTI, and YOLO, plus custom formats, and integrates through an API for MLOps pipelines.
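
Format support matters because the same box is written differently in each convention. As a small illustration, the sketch below converts a COCO-style box (top-left `x`, `y`, `width`, `height` in pixels) into a YOLO-style label line (class index plus normalized center and size). This is generic conversion code, not Vivid 3D’s exporter, and the mapping from category to zero-based class index is assumed to be handled by the caller.

```python
def coco_to_yolo(bbox, image_width, image_height):
    """Convert a COCO box [x, y, w, h] in pixels to normalized (cx, cy, w, h)."""
    x, y, w, h = bbox
    return (
        (x + w / 2) / image_width,
        (y + h / 2) / image_height,
        w / image_width,
        h / image_height,
    )

def yolo_line(class_index, bbox, image_width, image_height):
    """Format one YOLO label line: class index, then the four normalized values."""
    cx, cy, w, h = coco_to_yolo(bbox, image_width, image_height)
    return f"{class_index} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}"

# A 200x200 px box centred in a 1280x720 image.
line = yolo_line(0, [540, 260, 200, 200], 1280, 720)
# line == "0 0.500000 0.500000 0.156250 0.277778"
```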

Vivid.Studio gives teams a practical environment builder, a game-style editor for assembling production-ready scenes and layouts. That matters because environment creation is usually the hidden bottleneck in synthetic programs.

Vivid.Simulation Generator extends this with simulation-oriented outputs, making it easier to produce scenario variants and export simulation-ready results without babysitting complex setups.

What I like about this approach is the lifecycle thinking. Create content, generate datasets, train models, deploy or export, then test with new synthetic cases. That loop is explicit in Vivid 3D’s product framing.

That loop is how Physical AI teams move fast without breaking things.

Real-World Use Cases. Where Synthetic Data Meets Physical AI

Let’s keep this grounded. Where does synthetic data pay off first? In my view, it’s wherever the environment is repetitive enough to simulate, but chaotic enough to cause costly failures.

Warehouse and logistics automation is a classic case. Navigation, shelf interaction, pallet detection, human avoidance, product recognition. You can simulate aisle widths, varying light temperatures, reflections, partial occlusions, and the kind of clutter operations teams swear they will “clean up next week.” The robot still has to handle it today.

Industrial autonomous vehicles in controlled sites come next: ports, mines, large campuses. You can stress-test driving policies and perception modules with weather, dust, dynamic obstacles, and traffic patterns without waiting for nature to cooperate.

Inspection drones are also a strong fit. The camera viewpoint is dynamic, the backgrounds are messy, and you want coverage across angles that humans do not enjoy capturing. Synthetic helps you build defect catalogs without staging dangerous climbs or shutdowns.

Robotic manufacturing is another winner, especially for pick-and-place and defect detection. Once you have high-fidelity 3D assets, synthetic lets you generate “infinite” variability in part orientation, lighting, and surface imperfections. It’s faster than blocking a production line for data collection.

A subtle but important extra benefit is governance. In many regulated or safety-critical deployments, you need to explain what you trained on and why. Article 10 of the EU AI Act sets expectations around data governance, representativeness, error reduction, and documentation for training, validation, and testing datasets in high-risk systems.

Synthetic pipelines, when done properly, can actually make compliance easier because the data has lineage by design.

The Competitive Advantage. Why Speed to Data Wins

Here’s the uncomfortable truth: most Physical AI teams are not limited by cleverness. They are limited by iteration speed.

The organizations that win will not be the ones who write the most beautiful model code. They’ll be the ones who can run the tightest loop:

Observe failure, create targeted data, retrain, validate, redeploy.

Real-world data collection stretches that loop. It adds weeks. Sometimes months. Synthetic data compresses it into days, sometimes hours, especially when scenario generation is automated and integrated.

Also, synthetic changes the economics of exploration. You can afford to test more hypotheses. You can afford to model rare events. You can afford to treat robustness like a product requirement, not a wish.

Over time, that compounds. Your dataset becomes an asset. Your scenario library becomes institutional knowledge. Your team develops a feel for coverage: where your models are blind, what confuses them, what needs more variation.

In other words, you stop guessing. You start engineering.

Conclusion. The Intelligence Age Needs a Data Engine

Physical AI is not waiting in the wings. It’s already reshaping logistics, manufacturing, inspection, and autonomy. The constraint is not compute. The constraint is not even model size. It’s the reality that robots need massive, structured, labeled visual data to learn safely.

Synthetic 3D data is the practical solution because it turns training data into something you can manufacture, version, audit, and improve. It’s a calmer way to build systems that must operate in chaotic places.

Vivid 3D is positioned around that exact need: a full lifecycle visual data platform that connects 3D content creation, scenario design, synthetic dataset generation, and model training workflows into one operational loop.

If you’re building Physical AI, the question is no longer whether you’ll use synthetic data. It’s whether you’ll treat it as a side project or as core infrastructure.
